Cache Replacement Policies

Cache Replacement Policies are essential techniques in computer systems that determine which data should be removed from cache memory when new data needs to be loaded. These policies improve system performance by efficiently managing limited cache space.

What are Cache Replacement Policies?

Cache replacement policies define the strategy used by a system to replace existing data in cache when it becomes full. These policies aim to maximize cache hit rate and minimize access time.

Below is the list of core concepts of cache replacement policies explained in detail.

1. Definition of Cache Replacement Policies

Cache replacement policies are algorithms used to decide which cache block should be replaced when new data arrives.

-

Limited Cache Space: Cache cannot store all data

-

Decision Making: Chooses which data to remove

-

Performance Optimization: Improves speed of memory access

-

Used in Systems: Found in CPUs, operating systems, and databases

2. Importance of Cache Replacement Policies

Cache replacement policies are important because they directly affect system efficiency and performance.

-

Improves Cache Hit Ratio: Keeps frequently used data

-

Reduces Memory Access Time: Faster data retrieval

-

Efficient Resource Use: Optimizes limited memory space

-

Enhances Overall Performance: Better system responsiveness

Types of Cache Replacement Policies

There are different types of cache replacement policies, each with its own strategy and use case.

Below is the list of major cache replacement policies explained in detail.

1. First In First Out (FIFO)

FIFO replaces the oldest data in the cache first, regardless of how frequently it is used.

-

Oldest Data Removed: First stored item is replaced first

-

Simple Implementation: Easy to understand and apply

-

No Usage Tracking: Does not consider frequency of access

-

May Be Inefficient: Frequently used data can be removed

2. Least Recently Used (LRU)

LRU replaces the data that has not been used for the longest period of time.

-

Based on Usage Time: Tracks recent access

-

Keeps Active Data: Frequently used data stays longer

-

Better Performance: Improves hit ratio compared to FIFO

-

Requires Tracking: Needs extra memory to track usage

3. Least Frequently Used (LFU)

LFU removes the data that is accessed the least number of times.

-

Frequency-Based: Tracks number of accesses

-

Removes Rare Data: Less used items are replaced

-

Efficient in Stable Patterns: Works well when access patterns are consistent

-

Overhead: Requires counters for each cache entry

4. Random Replacement

Random replacement selects a random block to replace when cache is full.

-

Random Selection: No specific rule followed

-

Simple and Fast: No tracking required

-

Low Overhead: Minimal computation

-

Unpredictable Performance: May remove important data

5. Most Recently Used (MRU)

MRU removes the most recently accessed data first.

-

Removes Latest Data: Opposite of LRU

-

Useful in Some Cases: Works when recent data is less likely to be reused

-

Less Common: Not widely used compared to LRU

-

Special Use Cases: Suitable for specific workloads

How Cache Replacement Policies Work

Cache replacement policies work by analyzing data usage patterns and selecting which data should be removed.

Below is the list of working steps of cache replacement policies.

1. Cache Hit

A cache hit occurs when requested data is found in the cache.

-

Fast Access: Data is quickly retrieved

-

No Replacement Needed: Cache remains unchanged

-

Improves Performance: Reduces memory access time

2. Cache Miss

A cache miss occurs when requested data is not found in the cache.

-

Slow Access: Data fetched from main memory

-

Replacement Needed: Cache block must be replaced

-

Policy Applied: Replacement policy decides removal

3. Replacement Decision

The system uses a replacement policy to select which data to remove.

-

Algorithm Applied: FIFO, LRU, LFU, etc.

-

Target Block Selected: Based on policy rules

-

New Data Stored: Cache is updated with new data

Comparison of Cache Replacement Policies

Different cache replacement policies have different strengths and weaknesses.

Below is the comparison table for better understanding.

| Policy | Strategy | Advantages | Disadvantages | Use Case |

|---|---|---|---|---|

| FIFO | Remove oldest | Simple and fast | Ignores usage | Basic systems |

| LRU | Remove least recently used | High hit ratio | Needs tracking | General systems |

| LFU | Remove least frequently used | Good for stable patterns | Complex implementation | Data analysis systems |

| Random | Remove random item | Very simple | Unpredictable | Low-cost systems |

| MRU | Remove most recently used | Useful in special cases | Rarely used | Specific workloads |

Example of Cache Replacement Policies

Understanding cache replacement policies becomes easier with examples.

1. FIFO

The First-in-First-out (FIFO) algorithm replaces the oldest block stored in the cache.

Note: If there are 4 positions in the cache when the 5th block comes into the cache, then the element of position 1 is replaced in any case.

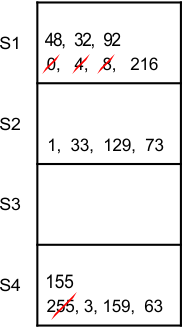

Question 1: Consider a 4-way set associative cache with a total of 16 cache blocks. Main memory block requests are

0,255,1,4,3,8,133,159,216,129,63,8,48,32,73,92,155

As 4 blocks can reside in each line at a time. So, the solution is given in the following diagram

Note: A block at position 1 in any cache line will be replaced after the line is full. If the same block comes again, it will be a cache hit. It does not change the block position from 1 to any else.

2. LRU

Replace the cache block, which was not used for the longest period of time in the past.

Question:1

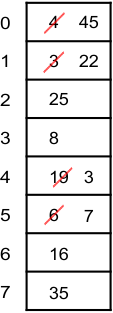

Consider a fully associative cache with 8 cache blocks (0-7) and the following sequence of memory block requests: 4, 3, 25, 8, 19, 6, 25, 8, 16, 35, 45, 22, 8, 3, 16, 25 and 7. By using LRU, find the position of memory block 7 in the cache.

Solution: At point 45, the 8 blocks of cache are filled, and no free space is available to accommodate a new block in the cache. So we check back the reference string at point 45 and replace the least recently used block in the cache, which is 4 in the example. And so on, the next incoming block is 22, which will be replaced with block no. 3.

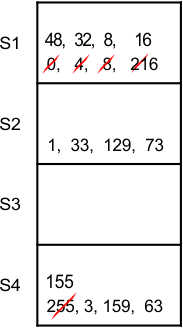

Question: 2

Consider a 4-way set associative cache mapping with 16 cache blocks. Main memory block requests are 0,255,1,4,3,8,133,159,216,129,63,8,48,32,73,92,155,16.

Solution:

Note: 4 blocks can exist in any line at a time. So

Advantages of Cache Replacement Policies

Cache replacement policies provide several benefits in computer systems.

Below is the list of advantages of cache replacement policies.

1. Improved System Performance

Cache replacement policies help in improving overall system speed.

-

Faster Data Access: Frequently used data remains in cache

-

Reduced Latency: Less waiting time

-

Efficient Processing: Better CPU performance

2. Better Memory Utilization

They ensure optimal use of limited cache memory.

-

Smart Replacement: Removes unnecessary data

-

Maximizes Storage: Efficient use of cache space

-

Avoids Wastage: Keeps useful data longer

3. Adaptability to Workloads

Different policies can be used based on workload requirements.

-

Flexible Usage: Choose policy based on system needs

-

Custom Optimization: Adjust according to usage patterns

-

Wide Application: Used in many computing environments

Disadvantages of Cache Replacement Policies

Despite their advantages, cache replacement policies also have some limitations.

Below is the list of disadvantages of cache replacement policies.

1. Implementation Complexity

Some policies are difficult to implement.

-

LRU and LFU Complexity: Require tracking mechanisms

-

Hardware Cost: Additional memory needed

-

Difficult Design: Complex algorithms

2. Overhead Cost

Tracking usage patterns adds overhead.

-

Extra Computation: Time needed to track usage

-

Memory Overhead: Counters and timestamps

-

Performance Impact: May slow down system slightly

3. Not Always Optimal

No single policy works best for all situations.

-

Workload Dependency: Performance varies

-

Unpredictable Patterns: Some policies fail

-

Trade-offs Required: Balance between speed and accuracy

Real-World Applications of Cache Replacement Policies

Cache replacement policies are widely used in modern computing systems.

Below is the list of real-world applications.

1. CPU Cache Management

Processors use cache replacement policies to manage L1, L2, and L3 cache.

-

Fast Execution: Keeps frequently used instructions

-

Efficient Processing: Improves CPU speed

-

Smart Replacement: Reduces memory access time

2. Operating Systems

Operating systems use replacement policies for memory management.

-

Page Replacement: Similar concept used in virtual memory

-

Efficient RAM Usage: Improves multitasking

-

System Stability: Reduces memory conflicts

3. Database Systems

Databases use cache replacement policies to manage query results.

-

Faster Queries: Frequently accessed data stays in memory

-

Improved Performance: Reduces disk access

-

Better User Experience: Quick data retrieval

Conclusion

Cache Replacement Policies play a critical role in improving system performance by efficiently managing cache memory. By selecting the right replacement strategy such as FIFO, LRU, or LFU, systems can achieve faster data access, better memory utilization, and enhanced overall performance. Understanding these policies is essential for students and developers working in computer architecture, operating systems, and database systems.